The rise of generative artificial intelligence has pushed audiovisual manipulation to an unprecedented level. Today, anyone can produce synthetic videos and photos that are nearly indistinguishable from reality. This has created a scenario where trust in what we see is rapidly eroding, affecting everything from daily life to national security.

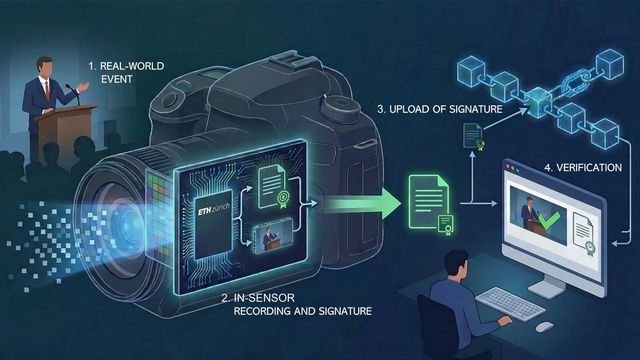

In response to this challenge, a group of engineers has developed a technology that could redefine digital authenticity: camera sensors capable of generating a cryptographic fingerprint at the very moment light hits the sensor. It is a physical solution, not just a software-based one, designed to close the door on visual manipulation.

🧩 The Problem: Visual Truth Is in Crisis

Generative AI has democratized the creation of synthetic content. This brings two simultaneous risks:

- People believing fake content because it confirms their biases.

- People dismissing real content by claiming “it’s probably AI.”

Both phenomena feed an ecosystem where visual evidence stops being evidence. On social networks and in armed conflicts, legal proceedings, or political campaigns, the ability to manipulate images and videos undermines the shared factual basis that public debate and decision-making depend on.

Current digital certification standards, such as cryptographic signature systems built into cameras and smartphones, are useful but not foolproof. Their main weakness is that the signature is applied far from the sensor, leaving room for the signal to be intercepted or altered before it is certified.
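The gap described above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: `DEVICE_KEY`, `sign_frame`, `verify_frame`, and `capture_pipeline` are hypothetical names, and an HMAC stands in for whatever signature scheme a real camera would use. The point is only that a signer placed after an interceptable stage will faithfully certify whatever it receives.

```python
import hashlib
import hmac

# Hypothetical device secret. HMAC is only a stand-in for a real signature scheme.
DEVICE_KEY = b"demo-device-key"

def sign_frame(frame: bytes) -> str:
    """Certify a frame with the device key."""
    return hmac.new(DEVICE_KEY, frame, hashlib.sha256).hexdigest()

def verify_frame(frame: bytes, signature: str) -> bool:
    """Check that the signature matches the frame."""
    return hmac.compare_digest(sign_frame(frame), signature)

def capture_pipeline(sensor_output: bytes, tamper=None):
    """Sensor -> (optional interception point) -> signer."""
    frame = sensor_output
    if tamper is not None:
        # The signal is altered before it ever reaches the signer.
        frame = tamper(frame)
    return frame, sign_frame(frame)

real_scene = b"raw pixels of the real scene"

# Honest path: the signed frame is exactly what the sensor produced.
honest_frame, honest_sig = capture_pipeline(real_scene)
honest_ok = verify_frame(honest_frame, honest_sig) and honest_frame == real_scene

# Attack path: the frame is swapped between the sensor and the signer.
forged_frame, forged_sig = capture_pipeline(
    real_scene, tamper=lambda _frame: b"synthetic pixels"
)
forged_accepted = verify_frame(forged_frame, forged_sig)
matches_real = forged_frame == real_scene
```

In this sketch the forged frame verifies successfully even though it no longer matches the scene, which is the weakness that moving the signing step next to the pixels is meant to remove.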

🔒 The Innovation: Authenticity Guaranteed From the Sensor Itself

The new technology proposes a radical shift: integrating cryptographic circuits directly next to the sensor’s pixels. This enables:

- Generating a unique mathematical fingerprint at the exact moment of capture.

- Locking that fingerprint with a private key physically embedded in the microchip.

- Ensuring that any later alteration invalidates the signature, so the content cannot be modified without leaving detectable traces.

- Allowing anyone to verify authenticity using a public key registered in immutable systems, such as blockchain.
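The four steps above can be sketched in a few lines of Python. This is a toy illustration, not the engineers' actual design: the article does not say which algorithm the sensor uses, so textbook RSA over a SHA-256 hash is assumed here, the key is built from two small well-known primes and offers no real security, and the function names are hypothetical. It requires Python 3.8 or later for the modular inverse.

```python
import hashlib

# Toy key material built from two known Mersenne primes. NOT secure:
# a real chip would hold a much larger key that never leaves the hardware.
P = 2**127 - 1
Q = 2**89 - 1
N = P * Q                           # public modulus
E = 65537                           # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))   # private exponent (stays "inside the chip")

def fingerprint(pixels: bytes) -> int:
    """Hash the raw pixel data and reduce it into the range of the toy key."""
    digest = hashlib.sha256(pixels).digest()
    return int.from_bytes(digest, "big") % N

def sign_at_capture(pixels: bytes) -> int:
    """Lock the fingerprint with the private key at the moment of capture."""
    return pow(fingerprint(pixels), D, N)

def verify(pixels: bytes, signature: int) -> bool:
    """Anyone holding the public values (N, E) can check the content."""
    return pow(signature, E, N) == fingerprint(pixels)

original = b"raw pixel data from the sensor"
signature = sign_at_capture(original)

# Any later alteration changes the fingerprint, so the old signature fails.
edited = original.replace(b"raw", b"fake")

original_is_valid = verify(original, signature)
edited_is_valid = verify(edited, signature)
```

Here the unmodified capture verifies and the edited copy does not. Publishing `N` and `E` in a tamper-resistant registry is what would let third parties run the `verify` step on their own.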

In other words, authenticity stops depending on software and begins depending on hardware, an environment far more difficult to tamper with.

🏭 The Challenge: Bringing This Technology to Market

Although the concept is solid, mass adoption faces several challenges:

- It requires new sensor manufacturing lines, not just software updates.

- It implies additional costs for camera and smartphone manufacturers.

- It demands a global agreement to standardize content verification.

However, the cost of not doing so could be even higher: a digital ecosystem where visual truth disappears entirely.

🌐 Why This Technology Matters for Businesses, Governments, and Users

Audiovisual authenticity is not just a technical issue; it is a social, economic, and security issue. Some of the most relevant impacts include:

- Preventing fraud and extortion based on deepfakes.

- Protecting audiovisual evidence in investigations and legal processes.

- Mitigating disinformation campaigns in political or geopolitical contexts.

- Increasing trust in corporate content, especially in sectors like healthcare, finance, or security.

- Protecting minors and vulnerable communities from image manipulation.

For organizations like Cristosoft, which promote digital education and technological security, this type of advancement represents an opportunity to drive better practices and raise awareness among users and companies.

🧭 Conclusion: Recovering Certainty in the Age of AI

Visual verification can no longer rely solely on good faith or software tools. The magnitude of the problem demands structural solutions. Cryptographic sensors represent a decisive step toward a digital ecosystem where we can clearly distinguish what is real from what is fabricated.

In a world saturated with synthetic content, authenticity will become a strategic value. And the technology capable of guaranteeing it from the source will be key to restoring digital trust.